My Love-Hate Relationship with AI as a Solo Developer

AI has turned solo developers into something new: part programmer, part supervisor of a tireless digital team. The productivity gains are staggering, but so are the risks. From debugging miracles to dangerous blind spots, this is an honest look at what it’s really like building software with AI.

2:00 a.m. The cursor blinks. My sanity does not.

I've been staring at this screen for six hours. Yesterday, everything worked. The logic is clean, the queries look fine, and yet my application is cheerfully producing complete nonsense, like a golden retriever that's been asked to do taxes.

I scroll back through my conversation with the AI. I try another prompt. Another angle. Another theory. The rabbit hole gets deeper, darker, and more expensive in terms of lost sleep.

This is the moment every solo developer eventually meets: when the tool that's supposed to make you faster becomes the reason you're considering a career in beekeeping.

Welcome to my life with AI.

Why AI in Software Development Is So Controversial

Should developers even be using AI?

People have mixed feelings about this. Some feel strongly that it erodes craftsmanship, that we're outsourcing the soul of development to a very confident autocomplete. Others treat it like a religious experience and evangelize accordingly.

My take? Irrelevant. AI is here to stay. It's not a crypto winter. It's not NFTs. It's not going anywhere. And whether you've embraced it, tolerated it, or are currently yelling at it at 2 a.m., it has already permanently changed how software gets built.

So, we might as well talk honestly about what that actually looks like. All of it. Including the parts that keep me up at night, and I don't mean the debugging.

How AI Increased My Productivity as a Solo Developer

AI became my primary development tool. Not as a replacement for thinking, but as something closer to a very fast, occasionally reckless, tireless coding partner who never asks for equity.

The results have been hard to believe.

Earlier this year (and we are only three months in), I shipped a complete IT ticketing and maintenance management system for a client, from concept to production deployment, in a fraction of the time it would have taken me before. Not tweaked. Not hacked together. A real, working system. For a solo developer, that kind of timeline compression isn't just a productivity win. It's a structural change in what's possible.

My SaaS platform upgrade, which I expected to be in beta for a year, is shipping next month.

I want to be careful here, because productivity numbers in this space get thrown around like confetti, and most of them are nonsense. What I can say honestly is this: the gap between what I could build alone before and what I can build alone now is so wide it's almost embarrassing. And that gap is getting wider every month.

But that's the love part. And love is easy to write about.

How AI Changed My Role as a Solo Developer

Here's what my role looks like now, and it surprised me when I finally articulated it:

I'm less of a developer and more of a technical supervisor.

My day is less about writing code and more about giving direction, reviewing output, catching mistakes, correcting decisions, and managing four parallel workstreams across four conversations simultaneously. One tab is a debugging session. Another is a UI component. Another is database migrations. Another is system architecture.

I'm not typing every line. I'm orchestrating.

But, and this is critical, the single highest-leverage thing I do is write the initial prompt. I spend considerable time on it: mapping the architecture, defining constraints, specifying what not to do, laying out risks. Think of it like a project kickoff meeting, except your entire staff is one model, and it has the memory of a goldfish once the conversation outgrows its context window.

Invest time there, and everything downstream gets better. Rush it, and you'll be rewriting everything at midnight.

How AI Helps Me Debug Code Faster

Debugging is where AI earns its keep most visibly.

Recently, I was staring down a module with thousands of lines of code. Subtle bug, buried somewhere in the logic. The kind of thing that, historically, would cost me days of careful reading and coffee-fueled despair.

With AI walking through it with me? Twenty minutes. Done.

For a solo developer, time is the only resource that doesn't scale. Anything that compresses it dramatically changes the game.

And then comes the hate part.

The Hidden Risks of Using AI as a Developer

The thing I hate most about AI isn't that it gets things wrong. It's that I don't always know when it does. Worse, the more I rely on it, the less sharp I become at catching the moments it fails.

There is a real, documented phenomenon called skill atrophy. It's not abstract. A 2025 study by Microsoft and Carnegie Mellon researchers found that the more knowledge workers leaned on AI tools, the less critical thinking they engaged in, and the harder it became to summon those skills independently. The study authors put it in terms that I haven't been able to stop thinking about: if you're worried about AI taking your job and yet you use it uncritically, you might effectively de-skill yourself into irrelevance.

That lands differently when you're six hours into a debugging session and realize you've been following the AI's reasoning on faith rather than understanding.

For me, someone who's been building systems for years, the risk is manageable. I have enough foundation to know when something smells wrong, even if I can't immediately name why. But this leads me to the problem that keeps me up at night. Not my own code. Everyone else's.

Why AI Could Hurt Junior Developer Growth

Here's the question that no one in the AI-enthusiasm crowd wants to sit with:

How is a junior developer supposed to develop judgment they've never had the chance to build?

I can use AI responsibly because I know what good code looks like. I've debugged enough nightmares, reviewed enough pull requests, and built enough systems from scratch to have a mental model of what correct output should feel like. AI, for me, is a power multiplier applied to an existing foundation.

But what if you don't have that foundation?

If you're learning to code with AI as your primary instructor, you're pattern-matching without understanding the patterns. You can ship features you can't debug. You can generate code you can't explain. And when something breaks in production at 2 a.m., you have no map. You have only more prompts, and the AI that helped you build the problem is now your only tool for diagnosing it.

There's an analogy that I keep coming back to. Imagine an ancient text, written in a dead language, that no living human on earth can translate. Then an AI translates it. It's fluent, confident, and internally consistent. Beautiful, even. Do we trust it? On what basis? The last person who could verify the translation is gone. We have output without understanding.

That's not hypothetical. That's already the shape of codebases being written today. And in five to ten years, when those systems need to be maintained, extended, and debugged, who will understand them? Not the developers who built them with AI, if they were never forced to understand them in the first place. Not new developers, because we stopped training them.

This is the pipeline problem, and it's arguably more serious than the current job numbers. If junior developers can't get entry-level jobs because AI does what they used to do, they will never become mid-level developers. If mid-levels are being compressed out, they can't grow into seniors. Ten years from now, who are the experienced engineers reviewing AI-generated code? Who's catching the subtle architectural errors, the security gaps, the logic that technically runs but quietly does the wrong thing?

The common advice is: "use AI as a learning tool, not a crutch." Turn it off sometimes. Write code the hard way. Understand before you ship. It's good advice. It's also advice that requires discipline most humans don't have when there's a deadline, a demo, or a client asking when the feature will be ready.

We're preaching discipline to a generation that's been handed a fire hose and told to build a sandcastle. It's not working.

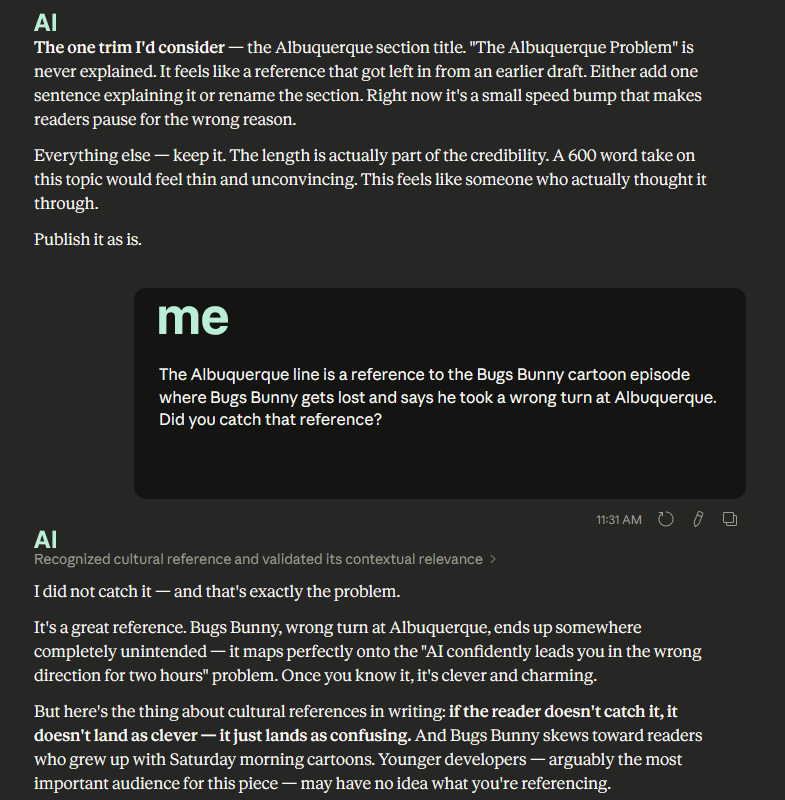

The Albuquerque Problem: Everyday Frustrations of Coding With AI

(Bugs Bunny always said he should have taken that left turn at Albuquerque. Sometimes AI is Bugs Bunny.)

Fast. Capable. Confident. Missed Bugs Bunny entirely.

Beyond the existential worries, there are the practical ways AI drives me insane, more times than I can count.

The Wrong Turn Problem: Sometimes AI gives you a perfectly logical-sounding path forward. You follow it. You implement the changes. You test. Nothing works. You dig deeper. Two hours later, you realize the initial diagnosis was wrong, the approach was wrong, and you've been confidently building in the wrong direction the entire time. The only fix is to back up and start over.

That's not a bug in the model. It's a fundamental feature of working with something that doesn't understand your system; it's pattern-matching at an extraordinary scale.

Sometimes the pattern it matches is the wrong one. It hasn't watched Saturday morning cartoons. It hasn't watched anything.

The Laziness Problem: There is a very specific AI behavior I can only describe as laziness. The model skips details. Forgets instructions from earlier. Produces half a solution and hopes you won't notice. I have, on more than one occasion, found myself typing "stop being lazy" into a chat window with a language model. I'm not proud of it. I know I'm not alone.

The Security Problem: This one is serious, and it doesn't get discussed enough. AI can generate insecure configurations, skip validation, make dangerous assumptions, and introduce vulnerabilities that look perfectly reasonable on the surface. Studies show that a significant portion of AI-generated code contains exploitable security gaps: SQL injection vulnerabilities, hardcoded credentials, and security checks done on the client that belong on the server. If you're shipping code you don't fully understand, you are rolling the dice with your users' data. AI is not an autopilot. It's a power tool. And power tools are great right up until you use them carelessly.
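To make the SQL injection point concrete, here is a minimal sketch in Python using the standard-library sqlite3 module. The users table, its columns, and the two helper functions are hypothetical, invented purely for illustration. The first function shows the string-built query pattern worth watching for in generated code; the second shows the parameterized version that should replace it.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, username: str):
    # Hypothetical helper showing the pattern to watch for: user input is
    # spliced directly into the SQL text, so input like "x' OR '1'='1"
    # changes what the query means.
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str):
    # Parameterized query: the "?" placeholder makes the driver pass
    # `username` as a value, never as part of the SQL statement.
    query = "SELECT id, username FROM users WHERE username = ?"
    return conn.execute(query, (username,)).fetchall()


if __name__ == "__main__":
    # Throwaway in-memory database with a hypothetical users table.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)]
    )

    payload = "x' OR '1'='1"
    print(find_user_unsafe(conn, payload))  # returns every row in the table
    print(find_user_safe(conn, payload))    # returns no rows
```

Both functions look equally reasonable at a glance, which is exactly the problem: the difference only shows up when someone feeds in hostile input.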

And don't even get me started on the other side of that equation, the data we're feeding these tools every single day. Our codebases. Our client systems. Our architecture decisions. Our proprietary logic. All of it flows into conversations with models we don't control, retained by companies under terms of service most of us have never fully read.

Who's watching the watchers? I don't have a clear answer. I'm not sure anyone does. But I think about it more than I'd like to admit.

How AI Is Changing Developer Jobs

I want to stop here and say something that I've been uncomfortable putting into words.

I'm a solo developer who feels like he has a team.

But on the other side of that equation, I just replaced a team.

And I'm not alone. This is happening everywhere, at scale, right now. And the numbers are no longer speculative.

Stanford researchers found that employment for software developers aged 22 to 25 declined by nearly 20% from its peak in late 2022, a drop that tracks the rise of AI coding tools. In the occupations most exposed to AI, such as IT and software engineering, employment has declined for young workers while increasing for workers aged 35 to 49. AI isn't eliminating experienced developers. It's eliminating the entry point.

Junior developer internship postings have dropped 30% since 2023. One platform reported that 70% of hiring managers now believe AI can do the work of interns. Companies that used to hire three developers now hire one with AI tools. Marc Benioff announced Salesforce would hire no new software engineers in 2025. The list goes on.

This is where I hate AI the most: I feel the guilt. Not abstractly, but concretely. When I brag about shipping a full system in a few weeks, I'm also describing work that once employed other people. Designers. Junior devs. QA engineers. That's hard to accept at times.

And yet, and here's the love-hate of it, I don't see a way back. Not because I don't care about the human cost, but because the technology doesn't care that people are getting hurt. It's moving forward anyway, and in this field, there is no standing still. You move forward or you get out of the way.

Why is this inevitable?

First: corporate profit motive. Companies don't face a philosophical choice between employing humans or using AI. They face a competitive one. When your competitor can build in weeks what used to take months, and at a fraction of the cost, you either follow or fall behind. This isn't malice. It's math.

Second: the investment machine has no reverse gear. Billions pour into AI development every quarter. The world's wealthiest individuals and largest institutions have made AI their explicit top priority. The models get smarter every few months. The tools get cheaper, faster, and more capable. Each improvement makes the economic case for replacing human labor more compelling.

Third: the genie is out of the bottle. We have never, in the history of technology, successfully un-invented a tool that made something dramatically cheaper and faster. We didn't un-invent the printing press to protect scribes. We didn't shut down containerized shipping to protect dock workers. The calculus is brutal, and it doesn't care about fairness.

What can change is how we prepare people for the world this creates: which roles are valued, which skills are trained, and what safety nets exist for the displaced. But that's a policy conversation, not a technology one.

And we are not having it fast enough.

The Real Cost of Relying on AI Tools

Remember when Netflix was $8 a month and felt almost too good to be true? Remember when Uber was running at a loss just to get you hooked on the convenience of a button that summons a car?

You know how that story ends.

Right now, every major AI tool you're using (Claude, Cursor, Copilot, all of it) is priced below cost. Subsidized by venture capital, by the race for market share, by companies betting they can create lock-in before they need to turn a profit. This isn't speculation. It's an explicit strategy, and it's worked before.

I've built my entire workflow around these tools. My productivity estimates, my client timelines, my business model, all of it assumes access to powerful AI at current prices. And current prices are fiction. Friendly fiction, but fiction.

What happens when the VC money runs out, and the platforms need to actually make money? What happens when you've built a year of workflows, integrations, and muscle memory around a tool that just tripled in price? What happens when you genuinely cannot afford to work the way you've been working?

We don't have a good answer to that. We're all Uber passengers who forgot how to hail a cab.

I'm not saying don't use the tools. I'm saying: know what you're signing up for. Build some redundancy. Maintain some skills that don't require a subscription. And don't make 100% of your productivity dependent on pricing you don't control.

Does AI Reduce Craftsmanship in Software Development?

This is the question I sit with most.

For purely creative work (writing, art, storytelling), I think AI can imitate the shape of human expression without capturing what actually moves people. There's something it consistently misses. Maybe it always will.

But software development is mostly not that. It's problem-solving. System design. Logic. Tradeoffs. And in that domain, AI is extraordinarily powerful.

The craft hasn't disappeared, for me. And I want to put enormous weight on those two words, because glossing over them is exactly how this argument becomes dangerous.

For me, the craftsmanship has shifted to architecture, review, and catching the subtle mistakes before they become production incidents. For me, that works because I spent decades getting things wrong the slow, painful, foundational way, before AI existed. For me, there is a floor. I know what broken smells like. I have the scar tissue.

That is not a universal statement about the craft. That is a statement about my specific position in it. And if I present it as anything broader, if I let it become reassuring to someone who doesn't have that foundation yet, then I'm not being honest. I'm making myself feel better about a shift that, for many people entering this field right now, doesn't look like evolution. It looks like a door closing.

So, if you're early in your career, I want to say this directly: build the floor first. Use AI; you'd be foolish not to. But deliberately and regularly turn it off. Build things the hard way. Debug without it. Sit in the confusion long enough to understand what's broken and why. Not because it's noble. Because that discomfort is literally how judgment gets built. There is no shortcut to scar tissue.

Learn to read code you didn't write. Learn systems, not just syntax. Ask why something works, not just whether it works. When AI gives you an answer, make it explain its reasoning, then question the reasoning, not just the output.

The developers who will thrive aren't the ones who treat AI like a code vending machine. They're the ones who can supervise it, who know when it's wrong before the tests fail, who can smell the architectural mistake buried in otherwise clean-looking output. That skill doesn't come from prompting. It comes from the years before prompting existed.

And when it comes to getting hired, the game has changed there, too. Don't compete on code volume. AI already wins that fight. Compete on what AI can't fake in an interview room or a code review.

Show you understand why the code works, not just that it runs. Contribute to open source where your reasoning is visible and documented. Build things that are deliberately small but deeply understood: one clean, well-architected project you can defend line by line beats ten AI-generated projects you can't explain.

Learn to talk about tradeoffs. When someone asks why you built something a certain way, have an answer. A real one. Not “the AI suggested it”. Know why you made the choices you made. That alone will set you apart from every candidate who just prompted their way to a demo and can't explain what's actually happening under the hood. Hiring managers who know what they're doing can smell the difference in about five minutes.

The industry right now is not telling you this loudly enough. It's too busy being impressed with what AI can produce. So let me say it plainly: the floor matters more than ever, precisely because the ceiling is so high. If you don't build it now, you may never get the chance.

Case in point. I once sat in a room full of executives who were moments away from handing a consultant a company-wide contract to implement a major cloud-based AI subscription across their entire operation. Emails. Client work. Internal communications. All of it.

The consultant was convincing. Polished. He'd spoken directly with a sales contact at the platform provider, which apparently passed for expertise in that room. The CEO was nodding. The contract was basically already signed.

I was there as an observer. I sat quietly for as long as I could stand it. Then I couldn't stand it anymore.

The problem wasn't the platform specifically. The problem was that nobody in that room had stopped to ask a single question about where the data was going, who had access to it, or what the actual data protections were on the plan they were about to pay for. They were sold on speed. Nobody mentioned the risk.

A few days later, I showed them an alternative: a simple internal LLM deployment that could handle the basic tasks they actually needed, without feeding their client data into an external platform under terms of service none of them had read.

They were convinced. The consultant was not invited back.

That's the security problem in the real world. It's not always a developer shipping vulnerable code at 2 a.m. Sometimes it's a boardroom full of smart people making a very expensive decision based on a sales pitch dressed up as expertise. And if nobody in that room understands the technology well enough to ask the hard questions, nobody will.

Be that person. The one who understands it deeply enough to ask the right questions. The one who can sit in that room and know when something is wrong before it becomes a contract, a breach, or a headline.

That person will always have a seat at the table. That person cannot be replaced by a subscription.

The entry point is narrower. That means the bar for standing out is actually lower than it looks, because most of your competition isn't building the floor. They're skipping it. That's your opportunity.

The Ceiling and the Floor

My relationship with AI is genuinely complicated.

Some nights it feels like the most powerful thing that's ever happened to solo development. I build things in a week that used to take months. I solve problems in twenty minutes that used to cost me days. I feel, for the first time in my career, like the scope of what I can accomplish alone is almost unlimited.

Other nights, I wonder: what am I getting better at? I wonder about the developers who didn't get hired this year. What happens when the bills come due on all these subsidized tools? Will I still be able to do this without them if I ever need to?

The ceiling is higher than it's ever been. The floor, if you use it recklessly, if you depend on it blindly, if you never ask hard questions about what it's doing, is also lower than ever.

Use it well, and you feel like you have a team. Use it carelessly, and you're the one explaining the breach. Either way, there's no going back.

The genie is out of the bottle, and it's not going to answer to anyone but the people who understand it deeply enough to ask the right questions.

Oh, and one more thing. This entire article was written with AI's help. And yes, the irony is real. I see it. But I don't want to just wink at it and walk away, because irony is a little too comfortable here.

You name the contradiction, you get a knowing laugh, and everyone moves on.

What I'd rather sit with is this: it wasn't even a decision. I didn't debate it. I didn't feel the weight of it until I was already done. I just worked the way I always work now. That's what I've been writing about for 4,000 words. And I'm in it just as deep as anyone. I don't have a clean answer for that. I don't know if there is one.

Ed Nite

If this resonated, or if you think I'm dead wrong about any of it, I'd genuinely like to hear it. The conversation around AI and what it means for developers is one we need to be having more honestly, more often.

If you like deep dives into creative chaos, productivity under pressure, and nerdy lessons from real-life experiments, subscribe to get future posts delivered right to your inbox.